Introduction: The Looming Paradigm Shift

For nearly eighty years, the digital age has been woven from a tapestry of 0s and 1s, the binary language of classical computing. This paradigm has brought us supercomputers capable of quadrillions of calculations per second, artificial intelligence that can interpret human language, and a globally interconnected world. Yet, as we push the boundaries of science and technology, we encounter problems that even our most powerful classical machines find insurmountable: problems that require a fundamentally different way of thinking, a new kind of loom.

Enter Quantum Computing.

Born from the enigmatic laws of quantum mechanics—the physics of the unimaginably small—quantum computing is not merely a faster calculator. It represents a radical rethinking of how computation itself works, promising to unlock solutions to challenges that are currently impossible. From designing new pharmaceuticals to creating unhackable encryption, quantum computing stands at the precipice of a scientific and industrial revolution.

This 2000-word deep dive will take you on a journey into the heart of quantum computing: exploring its bizarre foundational principles, demystifying its complex mechanics, illuminating its transformative applications, and confronting the monumental engineering hurdles that stand between today’s noisy prototypes and tomorrow’s world-changing machines.

Three main areas of convergence and transformation are currently reshaping the advanced computing landscape:

- The Rise of Generative AI (including LLMs): This is driving explosive growth in compute requirements, favoring AI-centric GPUs and accelerators.

- Edge Computing and Real-Time Decision Making: This involves expanding processing capabilities at data collection points to support real-time operations.

- Hybrid Architectures and Dark HPC: This represents the growing reliance on blended infrastructure models, combining on-premises, cloud, and edge systems.

Quantum Computing’s Position in the Transformation

Quantum computing is positioned as a complementary technology that is poised to become an integral component of a broader strategy, delivering transformative value across industries within the next 3-4 years.

Specifically, its role is defined by:

- Complementing AI: It offers novel approaches to optimization and is expected to enhance large-scale training workflows for AI and generative AI models.

- Amplifying Edge Computing: Its potential to revolutionize tasks like optimization and decision-making aligns with the real-time processing needs of edge systems, promising to amplify their capabilities through the integration of quantum-enhanced algorithms.

- Integrating into Hybrid Architectures: Quantum resources are increasingly viewed as a vital part of hybrid models (blending on-premises, cloud, and edge systems), preparing organizations to address the emerging challenges of the advanced computing landscape.

The Current Situation: The Age of Verifiable Quantum Advantage (2025)

The field is still defined by the NISQ (Noisy Intermediate-Scale Quantum) era, a term coined to describe machines that have a moderate number of qubits (from dozens to over 1,000) but are prone to high error rates and short coherence times.

However, the major development in late 2025 is the shift from demonstrating mere Quantum Supremacy (doing something impossible for classical computers, but not useful) to demonstrating Verifiable Quantum Advantage (doing something useful, verifiably, faster than classical computers).

Key Technological Breakthroughs

Recent progress is concentrated on improving the quality of qubits and their control:

- Verifiable Quantum Advantage: In a major recent milestone, Google announced a breakthrough with its Willow quantum chip, demonstrating a new algorithm called Quantum Echoes. This algorithm reportedly ran 13,000 times faster than the best classical supercomputer on a specific problem related to modeling atomic interactions in a molecule. Crucially, the result is verifiable—it can be reproduced by other quantum computers or confirmed by experimental physics. This shifts the narrative toward potential applications in drug discovery and materials science.

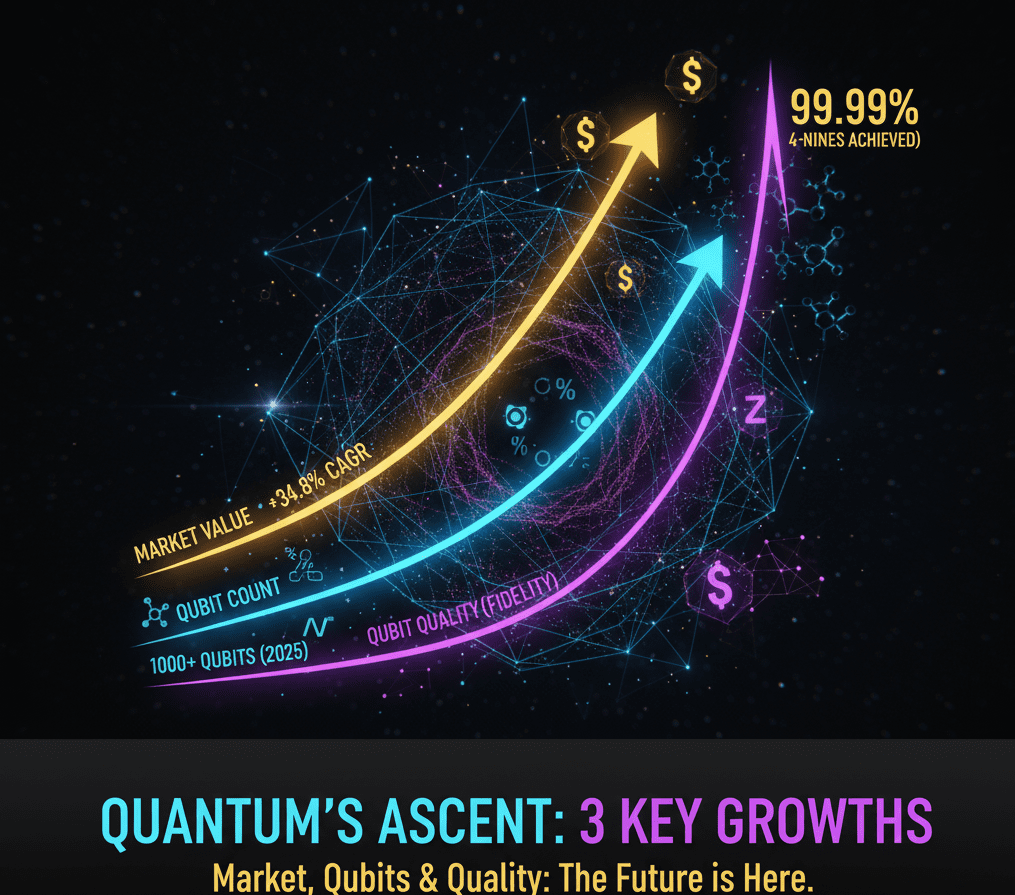

- Four-Nines Fidelity: Companies like IonQ have achieved a world-record 99.99% fidelity (accuracy) in their two-qubit gate operations. This is a crucial technical milestone, as high fidelity means fewer errors accumulate during a complex calculation, significantly reducing the number of physical qubits needed for error correction.

- Increased Qubit Count and Stability: IBM and other major players continue to deploy processors with increasing qubit counts (some exceeding 1,000 physical qubits) while improving their control and architecture to suppress noise. AWS, for instance, introduced its proprietary Ocelot chip designed for enhanced error mitigation.

- Hybrid Quantum-Classical Systems: The dominant trend is the use of cloud-based platforms (like Amazon Braket and IBM Quantum) that integrate quantum processors with high-performance classical computers. This hybrid approach is necessary to run algorithms in the NISQ era, where the classical computer handles the complex control and error mitigation loops.
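To see why the jump from three nines to four nines of gate fidelity matters, here is a minimal sketch of how errors compound over a circuit. It assumes every gate fails independently with the same probability, which is a simplification of real hardware noise; the function name and the 1,000-gate circuit size are illustrative choices, not figures from any vendor.

```python
def circuit_success_probability(gate_fidelity: float, num_gates: int) -> float:
    """Chance that no gate fails, assuming independent, identical gate errors."""
    return gate_fidelity ** num_gates


# Compare a 99.9% gate with a 99.99% gate on a hypothetical 1,000-gate circuit.
for fidelity in (0.999, 0.9999):
    p = circuit_success_probability(fidelity, 1000)
    print(f"fidelity {fidelity}: {p:.2f} chance of an error-free run")
```

Under these assumptions the 99.9% gate leaves roughly a one-in-three chance of a clean run, while the 99.99% gate leaves roughly nine in ten, which is why each extra nine reduces the burden on error correction.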

Industry Focus and Early Commercialization

The industry’s focus is narrowing to the most quantum-suitable problems:

- Quantum Chemistry: This is the killer app. Companies are investing heavily in using NISQ devices for molecular simulation to accelerate R&D in pharmaceuticals and new material design.

- Financial Services: Early engagement is centered on optimization problems, such as portfolio risk analysis and fraud detection, which have huge commercial value even with minor quantum improvements.

- PQC (Post-Quantum Cryptography): Government agencies and financial institutions are actively racing to adopt new cryptographic standards that will be secure against future fault-tolerant quantum computers (Shor’s algorithm threat). This migration is a massive, multi-year, multi-billion-dollar project.

Quantum Computing’s Evolution and Growth Rate

The growth of the quantum computing industry is two-fold: an exponential curve in technological capability (Moore’s Law for qubits) and a high CAGR (Compound Annual Growth Rate) in market value and investment.

1. The Growth Rate in Market Value

The global quantum computing market is experiencing aggressive growth, though from a relatively small base:

- Current and Projected Growth: The market was valued at approximately $1.16 billion in 2024 and is projected to grow to over $12.6 billion by 2032, exhibiting a robust CAGR of around 34.8% during that forecast period.

- Investment Drivers: This growth is fueled by massive R&D spending from major tech companies (IBM, Google, Microsoft) and significant government investment, particularly in the US, EU, and China, which view quantum technology as a key national security and economic priority.

- Stock Volatility: Stocks of pure-play quantum companies (like IonQ and Rigetti) often see high volatility, driven by both genuine technical milestones (like the “four-nines” fidelity) and speculation on government contracts or major corporate partnerships.
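The growth figure quoted above can be sanity-checked with the standard CAGR formula. This is a small sketch that assumes eight compounding years (2024 to 2032) and uses the two market values from the text; the function name is illustrative.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate: (end / start) ** (1 / years) - 1."""
    return (end_value / start_value) ** (1 / years) - 1


# Market values in billions of dollars, as quoted in the text.
rate = cagr(start_value=1.16, end_value=12.6, years=2032 - 2024)
print(f"Implied CAGR: {rate:.1%}")
```

The result lands just under 35%, in line with the quoted rate of around 34.8%; the small gap is consistent with the end value being "over" $12.6 billion.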

2. The Evolution of Hardware Capability

Hardware evolution is tracked not just by the number of qubits, but by the overall quality and stability, which is far more important.

| Metric | NISQ Era (Current) | Fault-Tolerant Era (Goal) | Evolution in 2025 |
| --- | --- | --- | --- |
| Qubit Count (Physical) | 100 to 1,000+ | Millions | Steady increase. IBM has deployed 1,121-qubit processors. |
| Two-Qubit Fidelity (Accuracy) | 99.7% to 99.9% | 99.9999% | Major leap: 99.99% fidelity achieved in some trapped-ion systems. |
| Error Correction | Early, high-overhead prototypes | Full, resource-efficient logical qubits | Progress in suppressing errors below the threshold needed for useful computation. |
| Key Output | Verifiable Quantum Advantage on narrow, specific scientific tasks (e.g., Quantum Echoes). | Universal utility: solving any hard problem exponentially faster. | |

The Roadblocks: What Slows Progress

The high growth rate is necessary because the obstacles remaining are colossal:

- The Error Correction Wall: The biggest single roadblock remains the stability of the qubit. Creating just one highly stable, logical qubit requires chaining together thousands of noisy physical qubits. Until this ratio dramatically improves, quantum computers will be limited to short, simple algorithms.

- Decoherence: The extreme fragility of quantum states forces hardware into cryogenics or high-vacuum environments, making the machines massive, difficult to operate, and expensive to scale.

- Algorithmic Development: The number of known quantum algorithms that offer a true, exponential speedup over classical algorithms is still small. The field needs more breakthroughs in quantum software to fully utilize the hardware once it becomes available.

In summary, the current situation is one of accelerating maturation. Quantum computing has left the purely theoretical stage and is now in a high-stakes engineering and commercial race. The growth rate reflects the massive investment driven by the realization that quantum utility, while not yet universal, is much closer for specific, high-value problems than previously thought, making the next five years critical for the industry.

The Quantum Alphabet—Bits vs. Qubits

To understand quantum computing, we must first understand its basic unit of information.

The Classical Bit: A Definitive Choice

Imagine a light switch. It’s either ON or OFF. This binary state, 0 or 1, is the essence of a classical bit. All classical computers, from your smartphone to a supercomputer, process information by manipulating these definitive 0s and 1s. To store or process more information, you need more bits, and each bit adds linearly to the machine’s capacity. A classical computer with N bits can only represent one of the $2^N$ possible states at any given moment.

The Quantum Qubit: A Universe of Possibilities

A qubit (quantum bit) is the quantum equivalent of a bit, but it’s vastly more powerful because it leverages two bizarre quantum phenomena:

- Superposition (The Spinning Coin): Unlike a classical bit, which must be either 0 or 1, a qubit can exist in a superposition of both states simultaneously. Think of a spinning coin in the air: it’s neither heads nor tails until it lands. A qubit is both 0 and 1, to varying degrees, at the same time. Its state is described by two amplitudes whose squared magnitudes give the probabilities of reading 0 or 1 when it is measured.

- Implication: The state space of a group of qubits grows exponentially with its size. A system of just two qubits, through superposition, can represent all four possible combinations (00, 01, 10, 11) simultaneously. With N qubits, you can represent $2^N$ states concurrently, a phenomenon known as quantum parallelism, although a measurement still returns only one N-bit result. This is where the exponential power begins.

- Quantum Entanglement (The Eerie Link): This is where things get truly weird. When two or more qubits become entangled, they become inextricably linked, sharing the same quantum fate no matter how far apart they are.

- The Shared Destiny: If you measure the state of one entangled qubit, you instantly know the state of its entangled partner(s), even if they are at opposite ends of the universe. This instantaneous correlation, which Einstein famously called “spooky action at a distance,” is not about communication faster than light but about the inherent interconnectedness of their quantum state.

- Implication: Entanglement is what allows qubits to work together in a highly complex and correlated way. It’s the mechanism through which quantum computers can process incredibly complex calculations involving multiple variables simultaneously, creating a computational space that classical computers simply cannot manage.
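Superposition and entanglement can be made concrete with a tiny state-vector simulation. The sketch below is plain Python, not any quantum SDK; the helper names and the convention that qubit 0 is the leftmost bit of each label are assumptions made for illustration. It applies a Hadamard gate and then a CNOT gate to two qubits that start in 00, which is the textbook recipe for an entangled Bell pair. Amplitudes are complex in general, but real numbers suffice here.

```python
import math


def apply_hadamard(state, qubit, num_qubits):
    """Put one qubit into an equal superposition (Hadamard gate)."""
    mask = 1 << (num_qubits - 1 - qubit)  # qubit 0 is the leftmost bit
    scale = 1 / math.sqrt(2)
    new_state = list(state)
    for i in range(len(state)):
        if (i & mask) == 0:
            a, b = state[i], state[i | mask]
            new_state[i] = scale * (a + b)
            new_state[i | mask] = scale * (a - b)
    return new_state


def apply_cnot(state, control, target, num_qubits):
    """Flip the target qubit wherever the control qubit is 1."""
    c_mask = 1 << (num_qubits - 1 - control)
    t_mask = 1 << (num_qubits - 1 - target)
    new_state = list(state)
    for i in range(len(state)):
        if i & c_mask:
            new_state[i] = state[i ^ t_mask]
    return new_state


def probabilities(state, num_qubits):
    """Map each bit string to its measurement probability (amplitude squared)."""
    return {format(i, f"0{num_qubits}b"): abs(a) ** 2 for i, a in enumerate(state)}


state = [1.0, 0.0, 0.0, 0.0]            # two qubits, both starting in 0
state = apply_hadamard(state, 0, 2)     # superposition on qubit 0
state = apply_cnot(state, 0, 1, 2)      # entangle qubit 1 with qubit 0
print(probabilities(state, 2))
```

After both gates, only the outcomes 00 and 11 have nonzero probability, each about one half: reading one qubit tells you the other. Note that the list holding the state has $2^N$ entries, which is why simulating many qubits this way quickly becomes impractical on classical hardware.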

Building the Unbuildable: How Quantum Computers Work

Bringing these quantum phenomena into a stable, controlled computational device requires extraordinary engineering. There are several physical implementations for qubits, each with its own advantages and challenges.

1. Superconducting Qubits (The Coldest Computers)

- Principle: These are tiny electrical circuits made from superconducting materials (like aluminum on a silicon chip) that, when cooled to near absolute zero (millikelvins, colder than deep space), lose all electrical resistance. This allows electrons to move without energy loss and exhibit quantum properties.

- Mechanism: Qubits are manipulated using microwave pulses (similar to WiFi signals) to change their quantum state (superposition, entanglement).

- Key Players: IBM, Google, Rigetti.

- Challenges: Extreme refrigeration requirements, sensitivity to noise, and scalability.

2. Trapped Ion Qubits (Levitating Processors)

- Principle: Individual atoms (ions) are stripped of an electron, leaving them positively charged. They are then suspended in a vacuum using electromagnetic fields and manipulated with precisely tuned laser beams.

- Mechanism: The internal energy states of the ions serve as the 0 and 1 states. Lasers are used to put them into superposition, entangle them, and perform operations.

- Key Players: IonQ, Honeywell (now Quantinuum).

- Challenges: Slower gate speeds than superconducting qubits and complex laser systems. In return, the platform is known for high fidelity (lower error rates) and longer-lived entanglement.

3. Topological Qubits (The “Unbreakable” Qubits)

- Principle: These theoretical qubits are based on quasiparticles (excitations that behave like particles) whose quantum states are defined by their topological properties, meaning they are inherently protected from local disturbances.

- Mechanism: They would be manipulated by “braiding” their paths in a two-dimensional material.

- Key Players: Microsoft.

- Challenges: The existence of these specific quasiparticles (Majorana fermions) has been difficult to definitively prove, making this a highly experimental and long-term approach.

Regardless of the physical implementation, the core challenge remains the same: maintaining the fragile quantum state long enough to perform meaningful calculations.

The Quantum Advantage: What It Can Do

Quantum computers aren’t designed to send emails faster or run video games. Their power lies in tackling specific, computationally intensive problems that are currently impossible for classical computers.

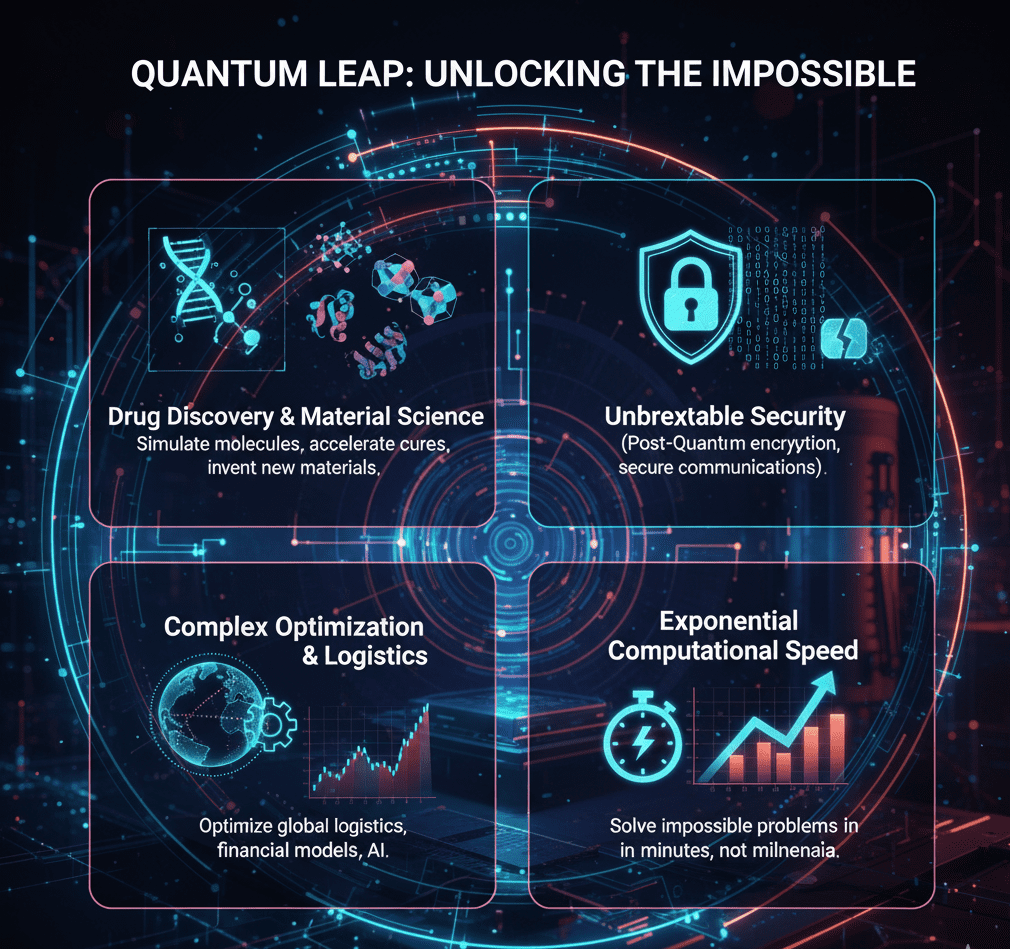

1. Quantum Chemistry and Materials Science: Simulating Reality

This is perhaps the most natural fit for quantum computing, as molecules themselves are quantum systems.

- Drug Discovery: Simulating how complex molecules interact with proteins is crucial for developing new drugs. Classically, this involves immense approximations. Quantum computers can simulate these interactions at a fundamental quantum level, enabling the design of highly targeted drugs with fewer side effects and dramatically shortening development times. Imagine designing an HIV or cancer drug from scratch, knowing precisely how it will bind to its target.

- Advanced Materials: Developing revolutionary new materials like high-temperature superconductors (for lossless power transmission), advanced catalysts (for more efficient industrial processes), or next-generation battery technologies (e.g., solid-state or lithium-air batteries) hinges on understanding molecular behavior. Quantum simulation can unlock these breakthroughs.

2. Optimization: Finding the Best Path Through Complexity

Many real-world problems involve finding the “best” solution from an almost infinite number of possibilities.

- Logistics and Supply Chains: Imagine optimizing the routes of thousands of delivery trucks, airline schedules, or the entire global shipping network in real time. Quantum algorithms like the Quantum Approximate Optimization Algorithm (QAOA) could find near-optimal solutions far faster than classical methods, saving billions in fuel and resources.

- Financial Modeling: Complex financial markets, portfolio optimization, risk analysis (e.g., Monte Carlo simulations), and fraud detection involve vast datasets and interconnected variables. Quantum algorithms could process these massive datasets to identify patterns and optimal strategies with unprecedented speed and accuracy.

3. Cybersecurity: A Double-Edged Sword

Quantum computing poses both a grave threat and a powerful defense in the realm of cybersecurity.

- The Threat (Shor’s Algorithm): Shor’s Algorithm, developed by Peter Shor in 1994, is a quantum algorithm that can efficiently factor large numbers into their prime components. The security of most of today’s public-key encryption (like RSA) relies on the fact that factoring large numbers is computationally intractable for classical computers. A sufficiently powerful quantum computer running Shor’s Algorithm could theoretically break much of the internet’s current encryption, compromising everything from banking transactions to national security secrets.

- The Defense (Post-Quantum Cryptography – PQC): Recognizing this threat, governments and cryptographers worldwide are racing to develop Post-Quantum Cryptography (PQC). These are new cryptographic algorithms designed to be resistant to attacks from both classical and quantum computers. The transition to PQC is a massive global effort, a “crypto-agility” challenge that requires the world to upgrade its digital infrastructure before a large-scale quantum computer becomes a reality.
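The link between Shor’s Algorithm and factoring can be sketched classically. Shor’s insight is that factoring reduces to finding the order r of a number a modulo N (the smallest r with a^r mod N = 1); the quantum computer speeds up only that order-finding step. In the sketch below the order is found by brute force, which stands in for the quantum part and scales badly for large N. The function names and the toy inputs are illustrative assumptions.

```python
from math import gcd


def find_order(a: int, n: int) -> int:
    """Smallest r > 0 with a**r % n == 1, by brute force.

    This is the step a quantum computer would accelerate.
    Requires gcd(a, n) == 1, otherwise no such r exists.
    """
    r, value = 1, a % n
    while value != 1:
        value = (value * a) % n
        r += 1
    return r


def factor_from_order(a: int, n: int):
    """Try to split n using the order of a; return None if this a is unlucky."""
    shared = gcd(a, n)
    if shared != 1:
        return shared, n // shared      # a already shares a factor with n
    r = find_order(a, n)
    if r % 2 == 1:
        return None                     # odd order: pick another a
    half = pow(a, r // 2, n)
    if half == n - 1:
        return None                     # trivial square root: pick another a
    p = gcd(half - 1, n)
    return p, n // p


print(factor_from_order(7, 15))
```

For N = 15 and a = 7 the order is 4, and the greatest common divisor step recovers the factors 3 and 5. Real RSA moduli are thousands of bits long, where the brute-force loop above is hopeless and a fault-tolerant quantum computer would be needed.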

4. Artificial Intelligence and Machine Learning

Quantum computing offers potential advantages for specific, computationally intensive tasks within AI.

- Quantum Machine Learning: Algorithms like Quantum Support Vector Machines or Quantum Neural Networks could potentially process massive datasets faster, identify subtle patterns in high-dimensional data, or accelerate training times for complex AI models. This could lead to more powerful image recognition, natural language processing, or personalized recommendations.

The Everest of Engineering—Challenges and the Road Ahead

Despite the immense promise, building a truly useful, fault-tolerant quantum computer is arguably the most demanding engineering challenge in human history. We are currently in the NISQ (Noisy Intermediate-Scale Quantum) era.

1. Decoherence: The Fickle Nature of Quantum States

The biggest enemy of a quantum computer is decoherence. Qubits are incredibly fragile. Even the slightest interaction with their environment—a stray photon, a vibration, a temperature fluctuation—can cause their superposition and entanglement to collapse, leading to errors.

- The Need for Isolation: This is why superconducting qubits require temperatures colder than deep space, and trapped ions are held in a vacuum. Achieving this isolation for a large number of qubits is incredibly difficult.

- Limited “Coherence Time”: Qubits only maintain their quantum properties for a very short duration (microseconds to seconds), limiting the complexity and length of computations that can be performed before errors accumulate.

2. Scalability and Error Correction: The Hardest Problem

Current quantum computers have from tens to just over a thousand physical qubits. A machine capable of breaking RSA encryption or simulating complex molecules might need thousands to millions of stable, error-corrected qubits.

- High Error Rates: NISQ devices typically have error rates of roughly 0.1% to 1% per two-qubit operation (99% to 99.9% fidelity), which is catastrophically high for complex calculations.

- Quantum Error Correction (QEC): To overcome these errors, researchers use QEC, which involves encoding one “logical” (error-free) qubit across many “physical” (noisy) qubits. The overhead is enormous: it might take 1,000 to 100,000 physical qubits to create just one stable logical qubit. This “error correction wall” is the primary barrier to achieving true fault-tolerant quantum computing.

- Interconnects: As qubit counts increase, the challenge of wiring and controlling individual qubits without interfering with their delicate quantum states becomes exponentially harder.
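The idea of spreading one logical qubit across many physical ones can be illustrated with the simplest classical analogue, a three-copy repetition code with majority voting. This is only a sketch of the principle: real quantum codes cannot copy states directly, must also handle phase errors, and use far more qubits. The function names and the sample error rates are illustrative assumptions.

```python
def encode(bit: int) -> list:
    """Store one logical bit as three physical copies."""
    return [bit] * 3


def decode(copies: list) -> int:
    """Recover the logical bit by majority vote."""
    return 1 if sum(copies) >= 2 else 0


def logical_error_rate(p: float) -> float:
    """Chance the vote is wrong: two or three of the copies flipped.

    Assumes each copy flips independently with probability p.
    """
    return 3 * p**2 * (1 - p) + p**3


# A single flipped copy is corrected by the vote.
noisy = encode(0)
noisy[1] ^= 1
print(decode(noisy))

for p in (0.1, 0.01, 0.001):
    print(f"physical error {p}: logical error {logical_error_rate(p):.2e}")
```

The logical error rate scales roughly with the square of the physical rate, so the encoding pays off more the better the underlying hardware is. That is the intuition behind the threshold: below a certain physical error rate, adding more qubits suppresses errors instead of multiplying them.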

3. Hardware vs. Software: A Co-Evolution

Building quantum hardware is only half the battle. We also need quantum software.

- New Algorithms: Developing algorithms that effectively utilize superposition and entanglement is a specialized skill. Frameworks like IBM’s Qiskit, Google’s Cirq, and Microsoft’s Q# are helping to make quantum programming more accessible, but it’s still a niche field.

- Optimizing for NISQ: The limitations of NISQ devices mean that current quantum algorithms must be designed to be very short and error-resilient. This requires deep understanding of both the algorithm and the underlying hardware.

4. The Talent Gap

The confluence of quantum mechanics, computer science, and cutting-edge engineering creates a significant demand for interdisciplinary talent. Universities and industry are racing to train the next generation of quantum physicists, engineers, and programmers.

Conclusion: The Horizon of the Impossible

Quantum computing is not an evolutionary step in computing; it is a revolutionary leap. It forces us to confront the limits of classical physics and embrace the strange, probabilistic nature of reality at its most fundamental level.

We are currently in a thrilling, foundational period. While the media often overhypes the immediate capabilities, the scientific progress is undeniable. Researchers are regularly achieving “quantum advantage” on specific, narrow problems, demonstrating that quantum machines can solve certain tasks faster than any classical computer. These breakthroughs, while not yet delivering broadly applicable “useful” applications, prove the fundamental correctness of the quantum computing paradigm.

The journey to building a truly fault-tolerant, universal quantum computer will be long and arduous, fraught with engineering challenges that push the very boundaries of human ingenuity. But the potential rewards—from creating life-saving medicines to unlocking new energy solutions and securing our digital future—are so profound that the effort is not just warranted, but essential.

Quantum computing isn’t about speeding up what we already do; it’s about enabling us to do what we currently cannot even imagine. It is the quest to harness the unseen fabric of the universe to weave a future beyond the bit, where the impossible might just become computationally inevitable. The quantum age is not just coming; it’s already quietly, profoundly, beginning.
