The USB-C Port for AI: Why the Model Context Protocol (MCP) is the Future of Intelligent Agents

Large Language Models (LLMs) have achieved a level of brilliance that, just a few years ago, was confined to science fiction. They can write code, compose music, generate hyper-realistic images, and summarize libraries of human knowledge. Yet, for all their genius, they suffer from one crippling limitation: isolation.

An LLM is a static brain encased in a glass box. It knows only what it was trained on, which quickly becomes outdated; it cannot access your company’s private systems, such as GitHub or Salesforce; and it often struggles with real-world context, which contributes to its infamous hallucinations. To build a truly intelligent agent, you can’t just make the brain bigger. You must connect it to the living, real-time world.

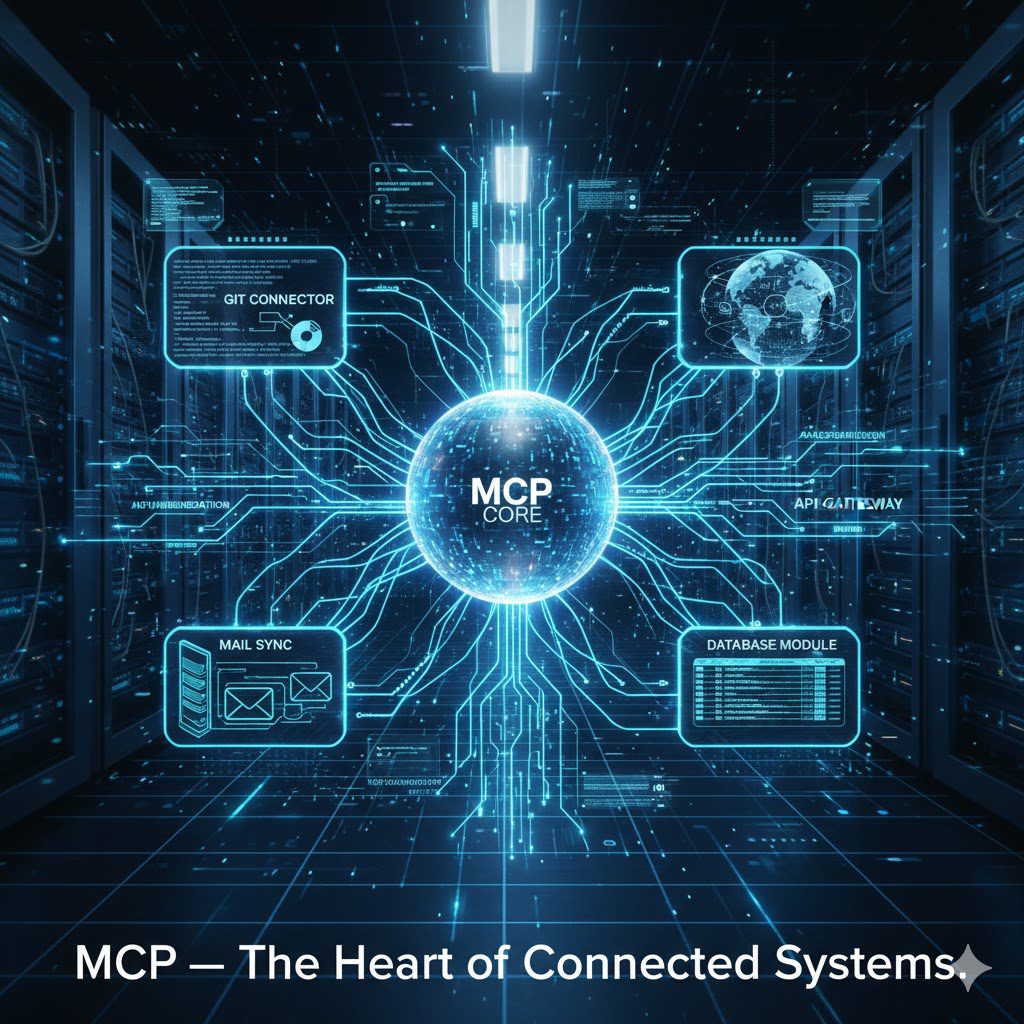

The Challenge of AI Isolation and the N x M Problem

Before the Model Context Protocol, connecting an LLM to the external world was a custom, complicated mess. Every time a developer wanted to connect an LLM (the N models) to a different external tool (the M data sources, like Google Drive, Slack, or a local file system), they had to write a bespoke integration.

This creates the classic N x M Problem: with N models and M tools, up to N x M custom connections are needed, so the integration burden grows multiplicatively rather than additively, leading to massive friction, security headaches, and slow development.
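To make the arithmetic concrete, here is a small sketch that compares the two approaches. The function names are made up for illustration, and the model assumes the simplest case: every model needs every tool, and a shared protocol needs one client per model plus one server per tool.

```python
def bespoke_integrations(models: int, tools: int) -> int:
    """Every model needs its own custom adapter for every tool."""
    return models * tools


def shared_protocol_integrations(models: int, tools: int) -> int:
    """Each model implements one client, each tool implements one server."""
    return models + tools


if __name__ == "__main__":
    for n, m in [(3, 5), (10, 50)]:
        print(
            f"{n} models, {m} tools: "
            f"{bespoke_integrations(n, m)} bespoke integrations vs "
            f"{shared_protocol_integrations(n, m)} with a shared protocol"
        )
```

With 10 models and 50 tools, that is 500 bespoke integrations against 60 protocol implementations.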

The Three Walls of Isolation

LLMs, operating in their native, isolated state, hit three walls that limit their utility:

- Knowledge Cutoff: An LLM’s knowledge is frozen at the date its training was completed. It cannot answer questions about real-time events, current stock prices, or the latest company sales figures without outside help.

- Lack of Action: Traditional models are excellent conversationalists, but poor actors. They can tell you how to book a flight, but they can’t actually click the buttons, check availability in a private inventory system, or send a confirmation email.

- The Context Void: Corporate LLM deployments often suffer because the model cannot securely access the single most important source of knowledge: the user’s immediate context (e.g., the code file open in their IDE, the customer ticket they are currently viewing, or the project calendar).

The Model Context Protocol breaks down all three of these walls by standardizing the flow of real-time context and actionable tools between the AI and the host application.

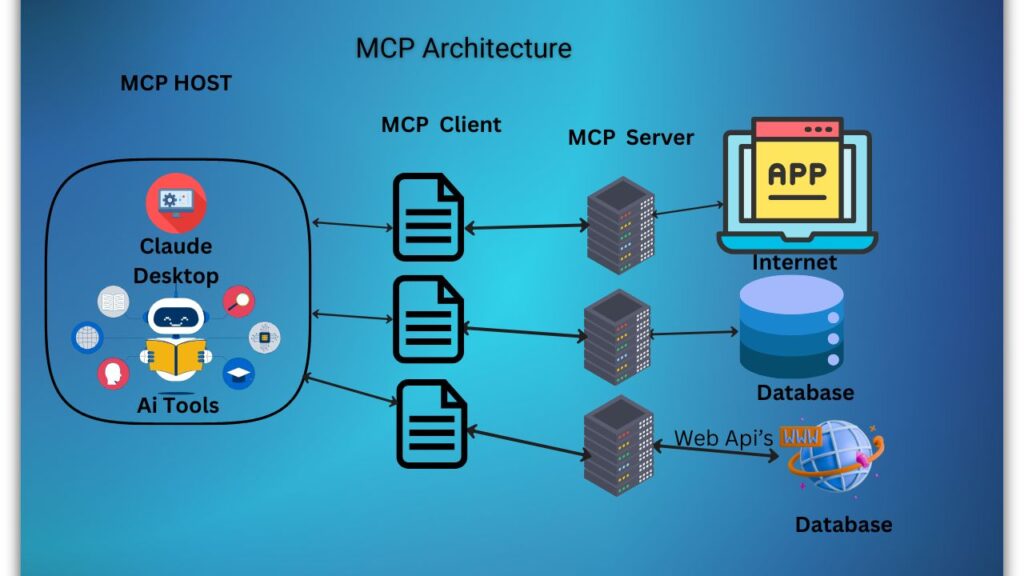

The Architecture of Connection—How MCP Works

MCP (Model Context Protocol) is an open-source standard for connecting AI applications to external systems. Using MCP, AI applications like Claude or ChatGPT can connect to data sources (e.g. local files, databases), tools (e.g. search engines, calculators) and workflows (e.g. specialized prompts), enabling them to access key information and perform tasks. Think of MCP like a USB-C port for AI applications. Just as USB-C provides a standardized way to connect electronic devices, MCP provides a standardized way to connect AI applications to external systems.

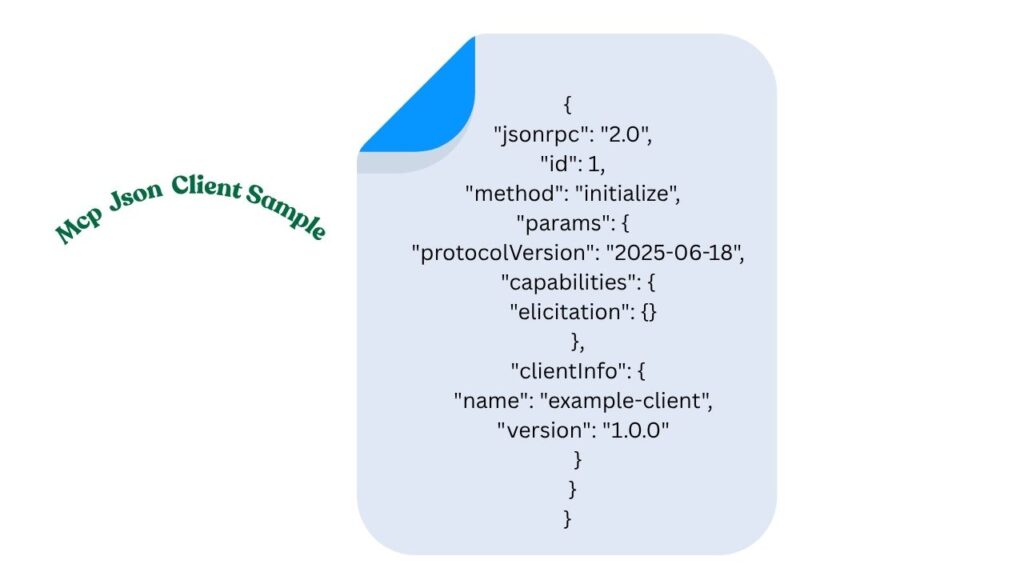

The Model Context Protocol uses a client-server architecture built on JSON-RPC 2.0 messages over standard transport mechanisms (STDIO for local processes, or Streamable HTTP, which replaced the earlier HTTP+SSE transport, for remote connections). This technical grounding keeps the protocol lightweight and easy to implement in any language that can read and write JSON.
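For readers who have not met JSON-RPC 2.0 before, the sketch below builds the three message kinds the protocol relies on: requests, notifications, and responses. The helper names are hypothetical; only the envelope fields (`jsonrpc`, `id`, `method`, `params`, `result`) come from JSON-RPC 2.0 itself.

```python
import json
from itertools import count
from typing import Any, Optional

_request_ids = count(1)  # simple increasing ids; any unique value works


def make_request(method: str, params: Optional[dict] = None) -> dict:
    """A request carries an id, so the receiver must answer it."""
    message = {"jsonrpc": "2.0", "id": next(_request_ids), "method": method}
    if params is not None:
        message["params"] = params
    return message


def make_notification(method: str, params: Optional[dict] = None) -> dict:
    """A notification has no id, so no response is expected."""
    message = {"jsonrpc": "2.0", "method": method}
    if params is not None:
        message["params"] = params
    return message


def make_result(request_id: Any, result: dict) -> dict:
    """A successful response echoes the id of the request it answers."""
    return {"jsonrpc": "2.0", "id": request_id, "result": result}


if __name__ == "__main__":
    request = make_request("tools/list")
    print(json.dumps(request))
    print(json.dumps(make_result(request["id"], {"tools": []})))
```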

Why does MCP matter?

Depending on where you sit in the ecosystem, MCP can have a range of benefits.

- Developers: MCP reduces development time and complexity when building, or integrating with, an AI application or agent.

- AI applications or agents: MCP provides access to an ecosystem of data sources, tools and apps which will enhance capabilities and improve the end-user experience.

- End-users: MCP results in more capable AI applications or agents which can access your data and take actions on your behalf when necessary.

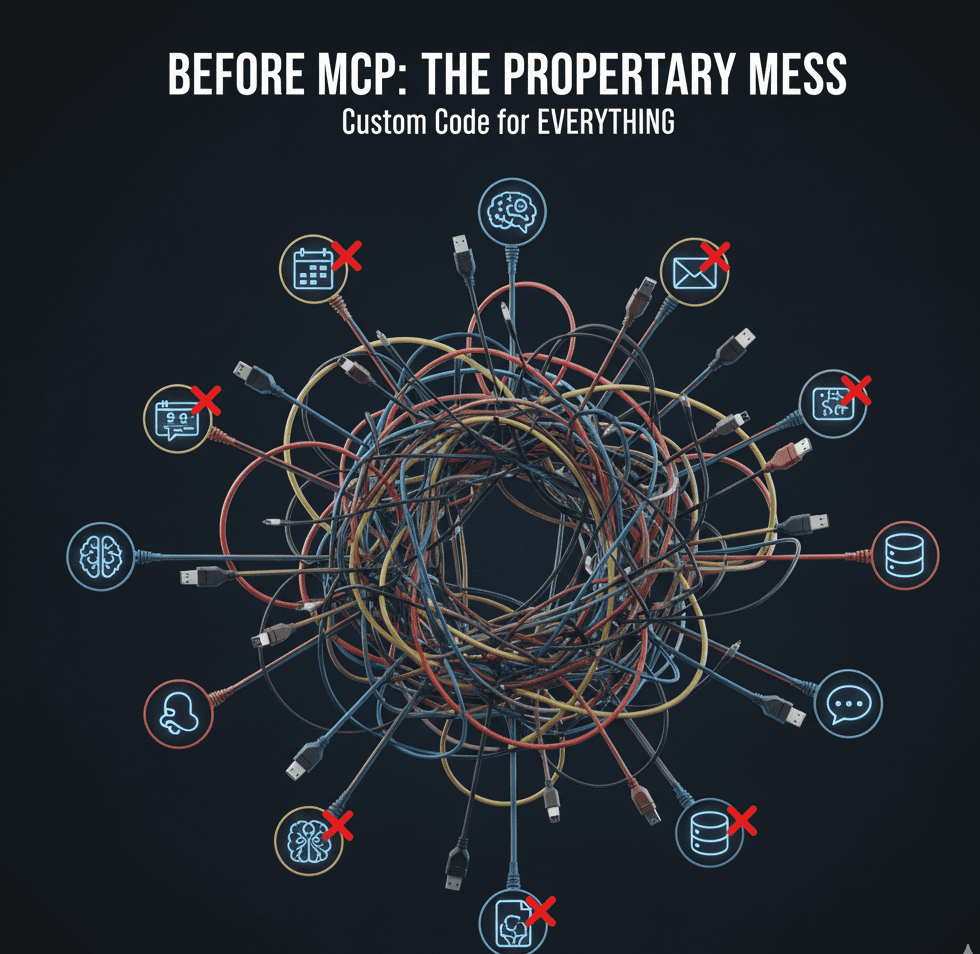

The protocol separates the process into three distinct, standardized components:

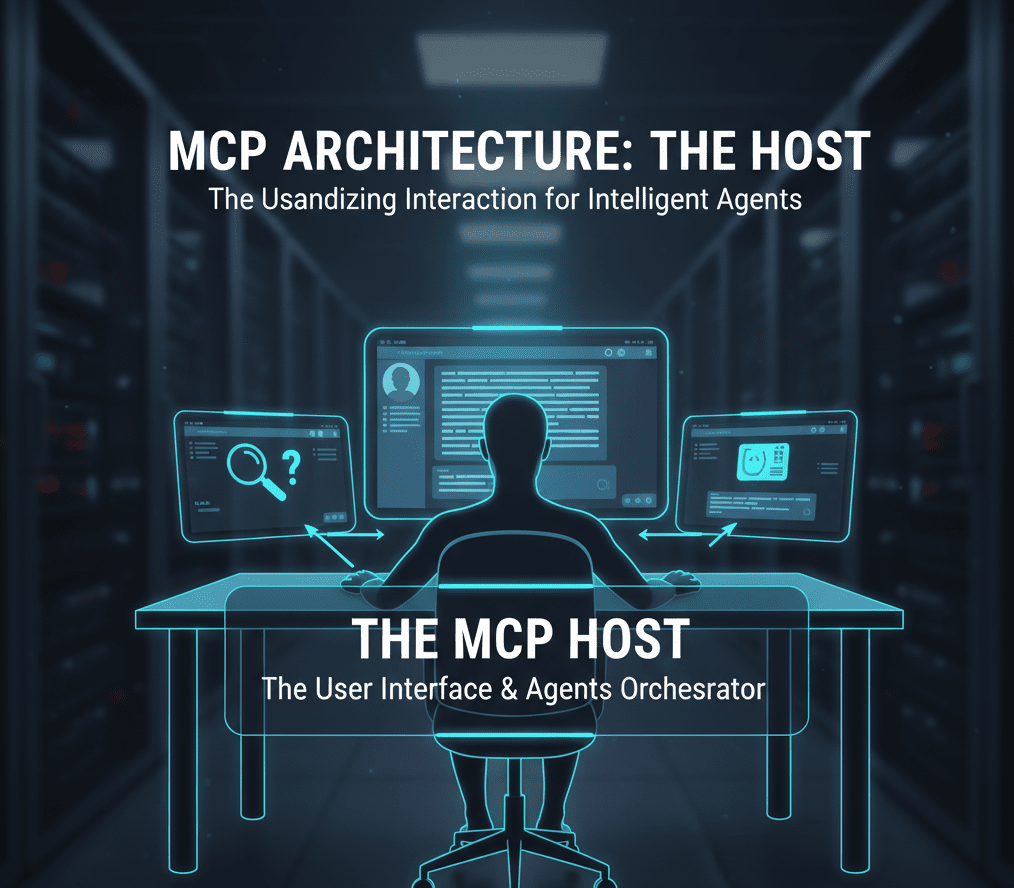

1. The MCP Host (The Environment)

This is the application or environment where the LLM resides and where the user interacts with the AI. Examples include an AI-powered IDE (Integrated Development Environment), a custom enterprise chat interface, or a desktop AI assistant.

- The Host receives the user query (e.g., “Summarize the GitHub pull request for this week’s sprint”).

- The Host initiates the request for external context via its Client.

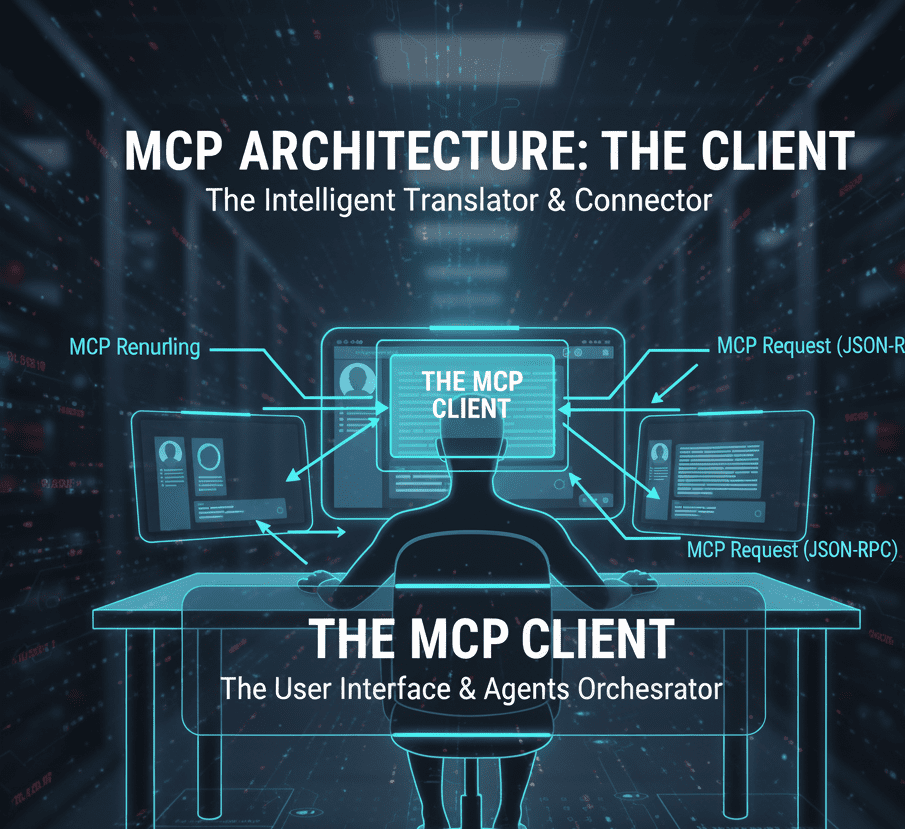

2. The MCP Client (The Broker)

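The Client sits within the Host application and acts as the broker or translator. Each Client maintains a dedicated connection to one Server, so a Host that talks to several Servers runs several Clients.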

- It speaks the MCP standard language.

- It is responsible for discovering what its connected external system (Server) offers, such as tools, resources, and prompts, so the Host can decide how to satisfy the user’s request.

- Together with the Host, it handles the crucial job of security, ensuring the AI’s requests are vetted and handled according to user permissions before being passed to the external system.

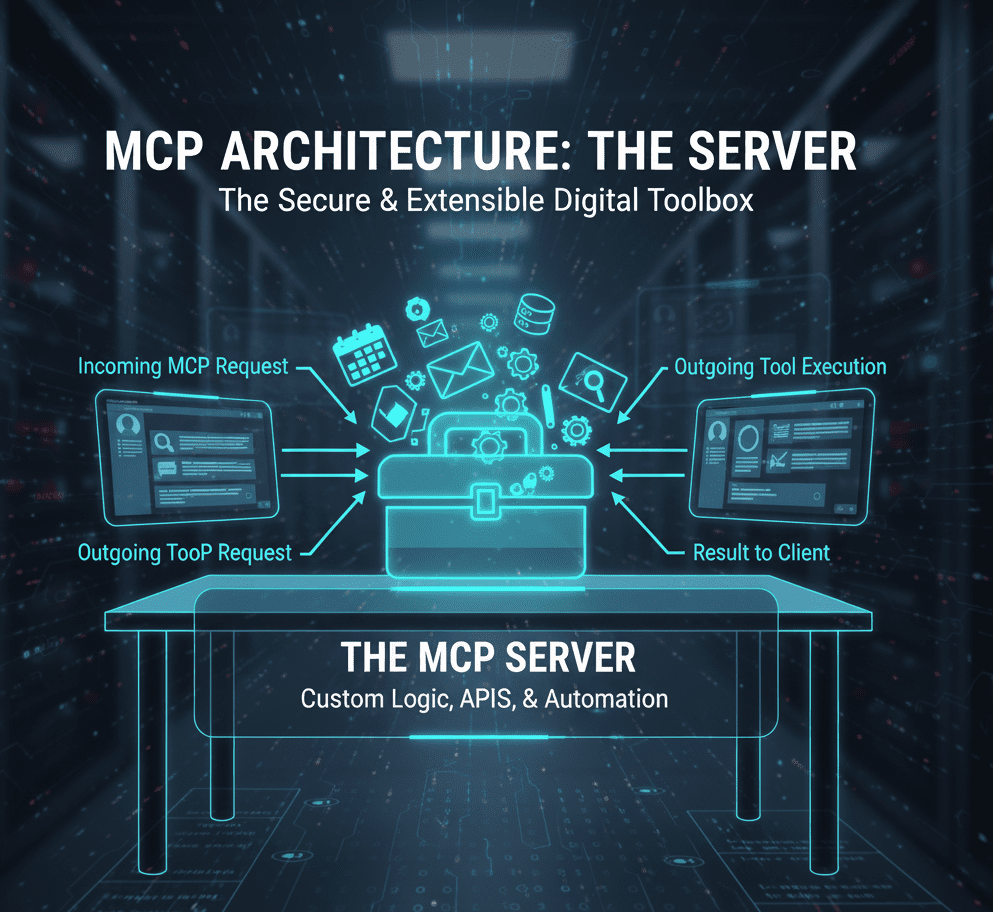

3. The MCP Server (The Connector)

The Server is the external service that actually owns the data or the capability. MCP Servers are developed to connect to specific systems (e.g., a GitHub Server, a PostgreSQL Database Server, or a Google Drive Server).

- The Server receives the standardized request from the Client.

- It executes the necessary function (e.g., pulling the latest code, running a database query, or retrieving a document).

- It returns the resulting context/data to the Client in a format the LLM can easily understand and use.

This modular separation means developers can create a single “GitHub Server” that can be instantly plugged into any LLM application that supports the MCP client. This elegantly solves the N x M problem.
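The division of labor can be sketched in a few lines. Everything here is a toy: `ToyServer`, `ToyClient`, `ToyHost`, and the `count_open_prs` tool are invented names, the "transport" is a direct method call, and the result shape is simplified. The point is only that one server can be reused by two unrelated hosts.

```python
from typing import Any, Callable, Dict, List, Optional


class ToyServer:
    """Stands in for an MCP Server: owns a capability and answers requests."""

    def __init__(self, name: str, tools: Dict[str, Callable[..., Any]]) -> None:
        self.name = name
        self._tools = tools

    def handle(self, request: dict) -> dict:
        method = request["method"]
        if method == "tools/list":
            result = {"tools": [{"name": n} for n in sorted(self._tools)]}
        elif method == "tools/call":
            params = request["params"]
            output = self._tools[params["name"]](**params.get("arguments", {}))
            result = {"content": [{"type": "text", "text": str(output)}]}
        else:
            # -32601 is the JSON-RPC 2.0 code for "Method not found".
            return {"jsonrpc": "2.0", "id": request["id"],
                    "error": {"code": -32601, "message": "Method not found"}}
        return {"jsonrpc": "2.0", "id": request["id"], "result": result}


class ToyClient:
    """One client per server connection; it lives inside the host."""

    def __init__(self, server: ToyServer) -> None:
        self._server = server
        self._next_id = 0

    def request(self, method: str, params: Optional[dict] = None) -> dict:
        self._next_id += 1
        message = {"jsonrpc": "2.0", "id": self._next_id, "method": method}
        if params is not None:
            message["params"] = params
        response = self._server.handle(message)
        if "error" in response:
            raise RuntimeError(response["error"]["message"])
        return response["result"]


class ToyHost:
    """The application the user sees; it owns one client per server."""

    def __init__(self, name: str, servers: List[ToyServer]) -> None:
        self.name = name
        self.clients = {s.name: ToyClient(s) for s in servers}

    def available_tools(self) -> Dict[str, List[str]]:
        return {name: [t["name"] for t in client.request("tools/list")["tools"]]
                for name, client in self.clients.items()}

    def call(self, server: str, tool: str, **arguments: Any) -> str:
        result = self.clients[server].request(
            "tools/call", {"name": tool, "arguments": arguments})
        return result["content"][0]["text"]


if __name__ == "__main__":
    github = ToyServer("github", {"count_open_prs": lambda repo: 3})
    for host in (ToyHost("ide", [github]), ToyHost("chat", [github])):
        print(host.name, host.available_tools(),
              host.call("github", "count_open_prs", repo="acme/web"))
```

Neither host contains any GitHub-specific code. Each one only knows how to list and call tools.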

Layers

MCP consists of two layers:

- Data layer: Defines the JSON-RPC based protocol for client-server communication, including lifecycle management, and core primitives, such as tools, resources, prompts and notifications.

- Transport layer: Defines the communication mechanisms and channels that enable data exchange between clients and servers, including transport-specific connection establishment, message framing, and authorization.

Conceptually the data layer is the inner layer, while the transport layer is the outer layer.

Data layer

The data layer implements a JSON-based exchange protocol that defines the message structure and semantics. This layer includes:

- Lifecycle management: Handles connection initialization, capability negotiation, and connection termination between clients and servers

- Server features: Enables servers to provide core functionality including tools for AI actions, resources for context data, and prompts for interaction templates from and to the client

- Client features: Enables servers to ask the client to sample from the host LLM, elicit input from the user, and log messages to the client

- Utility features: Supports additional capabilities like notifications for real-time updates and progress tracking for long-running operations
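The lifecycle step is easier to picture as messages. The sketch below follows the handshake as I understand it from the MCP specification: an `initialize` request, a response, then a `notifications/initialized` notification. The version string, the client and server names, and the helper functions are illustrative assumptions, not values to copy.

```python
from typing import Any

PROTOCOL_VERSION = "2025-06-18"  # example revision string, assumed for this sketch


def initialize_request(request_id: int, client_name: str, client_caps: dict) -> dict:
    """The client opens the session and declares what it supports."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "initialize",
        "params": {
            "protocolVersion": PROTOCOL_VERSION,
            "capabilities": client_caps,
            "clientInfo": {"name": client_name, "version": "0.1.0"},
        },
    }


def initialize_response(request: dict, server_name: str, server_caps: dict) -> dict:
    """The server answers with its own capabilities."""
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {
            "protocolVersion": request["params"]["protocolVersion"],
            "capabilities": server_caps,
            "serverInfo": {"name": server_name, "version": "0.1.0"},
        },
    }


def initialized_notification() -> dict:
    """The client confirms it is ready; notifications carry no id."""
    return {"jsonrpc": "2.0", "method": "notifications/initialized"}


def declared(capabilities: dict, feature: str) -> bool:
    """A feature may only be used if the other side declared it."""
    return feature in capabilities


if __name__ == "__main__":
    req = initialize_request(1, "example-ide", {"sampling": {}, "roots": {}})
    res = initialize_response(req, "example-git-server", {"tools": {}})
    client_caps: Any = req["params"]["capabilities"]
    print("server may request sampling:", declared(client_caps, "sampling"))
    print("client may call tools:", declared(res["result"]["capabilities"], "tools"))
```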

Transport layer

The transport layer manages communication channels and authentication between clients and servers. It handles connection establishment, message framing, and secure communication between MCP participants. MCP supports two transport mechanisms:

- Stdio transport: Uses standard input/output streams for direct process communication between local processes on the same machine, providing optimal performance with no network overhead.

- Streamable HTTP transport: Uses HTTP POST for client-to-server messages with optional Server-Sent Events for streaming capabilities. This transport enables remote server communication and supports standard HTTP authentication methods including bearer tokens, API keys, and custom headers. MCP recommends using OAuth to obtain authentication tokens.

The transport layer abstracts communication details from the protocol layer, enabling the same JSON-RPC 2.0 message format across all transport mechanisms.
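As a rough illustration of message framing, the stdio transport sends each JSON-RPC message as a single line terminated by a newline. The sketch below uses an in-memory `io.StringIO` in place of a real process pipe, and the function names are hypothetical.

```python
import io
import json
from typing import Iterator, TextIO


def write_message(stream: TextIO, message: dict) -> None:
    """Serialize one message per line; json.dumps escapes embedded newlines."""
    stream.write(json.dumps(message, separators=(",", ":")) + "\n")
    stream.flush()


def read_messages(stream: TextIO) -> Iterator[dict]:
    """Yield one parsed message for each non-empty line."""
    for line in stream:
        line = line.strip()
        if line:
            yield json.loads(line)


if __name__ == "__main__":
    pipe = io.StringIO()  # stands in for a child process's stdin or stdout
    write_message(pipe, {"jsonrpc": "2.0", "id": 1, "method": "ping"})
    write_message(pipe, {"jsonrpc": "2.0", "id": 1, "result": {}})
    pipe.seek(0)
    for message in read_messages(pipe):
        print(message)
```

The same dictionaries could be sent as HTTP POST bodies instead, which is what it means for the transport to be abstracted away from the message format.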

The Capabilities that Define Agentic AI

The real power of the MCP lies in the specific, advanced capabilities it standardizes. It goes far beyond simple API calls, enabling truly interactive and autonomous agentic workflows.

1. Resources and Tools: Context and Action

The most fundamental features provided by an MCP Server are Resources and Tools.

- Resources: This is the context or data the LLM needs to answer a question intelligently. When a user asks an LLM to explain a local code issue, a Server running alongside the IDE (Host) exposes the code file as a Resource, and the Client reads it on the Host’s behalf. If the model also runs locally, the LLM can analyze the file without the code ever leaving the user’s local environment.

- Tools: These are the functions or actions the AI can execute. Tools can be simple (like running a calculator) or complex (like cloning a Git repository, compiling a program, or updating a database record). The MCP standardizes how tools are described and invoked, so any tool exposed by a Server is callable from any MCP-compatible application.
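Here is a minimal sketch of what a Server might hold for one resource and one tool. The result shapes (`contents` for a resource read, `content` plus `isError` for a tool call, `inputSchema` on the tool descriptor) follow my reading of the MCP specification. The `add` tool, the file URI, and the function names are invented for the example.

```python
from typing import Dict

# Hypothetical in-memory resource store keyed by URI.
RESOURCES: Dict[str, str] = {"file:///project/main.py": "print('hello')\n"}

# A tool descriptor: a name, a description, and a JSON Schema for its arguments.
ADD_TOOL = {
    "name": "add",
    "description": "Add two numbers.",
    "inputSchema": {
        "type": "object",
        "properties": {"a": {"type": "number"}, "b": {"type": "number"}},
        "required": ["a", "b"],
    },
}


def read_resource(uri: str) -> dict:
    """Answer a resources/read request with the file's text."""
    return {"contents": [{"uri": uri, "mimeType": "text/x-python",
                          "text": RESOURCES[uri]}]}


def call_add(arguments: dict) -> dict:
    """Answer a tools/call request for the 'add' tool."""
    missing = [k for k in ADD_TOOL["inputSchema"]["required"] if k not in arguments]
    if missing:
        text = "missing arguments: " + ", ".join(missing)
        return {"content": [{"type": "text", "text": text}], "isError": True}
    total = arguments["a"] + arguments["b"]
    return {"content": [{"type": "text", "text": str(total)}], "isError": False}


if __name__ == "__main__":
    print(read_resource("file:///project/main.py"))
    print(call_add({"a": 2, "b": 3}))
    print(call_add({"a": 2}))
```

A resource is read-only context, while a tool call can fail or have side effects. That is why the tool result carries an error flag and the resource result does not.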

2. Elicitation: Asking the Human for Help

Sometimes, an AI agent is midway through a complex task and realizes it is missing a crucial piece of information that only the user can provide. Elicitation is the capability that standardizes this mid-workflow human intervention.

- Example: An AI agent is asked to “Commit the new code.” The Git Server (MCP Server) recognizes the request but doesn’t know which branch to commit to.

- Elicitation Action: The Server requests the Client to ask the user, “Which branch should I commit the code to?”

- This seamless, structured request-for-information is key to building complex, interactive workflows that don’t fail due to ambiguity.
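The branch question above could travel over the wire roughly like this. The `elicitation/create` method, the `requestedSchema` field, and the `accept` / `decline` / `cancel` actions are taken from my understanding of the MCP specification. The helper names and the `branch` field are assumptions made for this sketch.

```python
from typing import Optional


def branch_elicitation(request_id: int) -> dict:
    """Server -> Client: ask the user for the one missing value."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "elicitation/create",
        "params": {
            "message": "Which branch should I commit the code to?",
            "requestedSchema": {
                "type": "object",
                "properties": {"branch": {"type": "string"}},
                "required": ["branch"],
            },
        },
    }


def branch_from_response(response: dict) -> Optional[str]:
    """Return the chosen branch, or None if the user declined or cancelled."""
    result = response["result"]
    if result.get("action") != "accept":
        return None
    return result.get("content", {}).get("branch")


if __name__ == "__main__":
    request = branch_elicitation(7)
    accepted = {"jsonrpc": "2.0", "id": 7,
                "result": {"action": "accept", "content": {"branch": "main"}}}
    declined = {"jsonrpc": "2.0", "id": 7, "result": {"action": "decline"}}
    print(request["params"]["message"])
    print(branch_from_response(accepted))
    print(branch_from_response(declined))
```

The Server has to handle the "no answer" case explicitly, since the user is free to decline.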

3. Sampling: Recursive AI Interaction

Sampling is a powerful capability that allows an MCP Server to request that the LLM (residing in the Host) perform an additional, specialized task during the Server’s operation.

- Example: A Code Review Server (MCP Server) identifies a bug and needs the LLM to generate a quick summary of the previous 10 commits to provide better context for the fix.

- Sampling Action: The Server uses the Sampling capability to ask the Host’s LLM to generate the commit summary.

- This enables the creation of recursive, multi-step agents where the external system and the language model work together in a tightly integrated loop, all while keeping the LLM protected within the user’s control environment.
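A sketch of the commit-summary exchange follows. The `sampling/createMessage` method and the message shape follow my reading of the MCP specification. The stub model, the `approved` flag, and the error code are placeholders, and a real Host would show the request to the user before running it.

```python
from typing import Callable, List


def summary_request(request_id: int, commits: List[str]) -> dict:
    """Server -> Client: ask the Host's model to summarize some commits."""
    prompt = "Summarize these commits:\n" + "\n".join(f"- {c}" for c in commits)
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "sampling/createMessage",
        "params": {
            "messages": [{"role": "user",
                          "content": {"type": "text", "text": prompt}}],
            "maxTokens": 200,
        },
    }


def handle_sampling(request: dict, llm: Callable[[str], str], approved: bool) -> dict:
    """Host side: run the model only if the user approved the request."""
    if not approved:
        # Illustrative error code; the message explains the refusal.
        return {"jsonrpc": "2.0", "id": request["id"],
                "error": {"code": -1, "message": "User rejected sampling request"}}
    prompt = request["params"]["messages"][-1]["content"]["text"]
    return {
        "jsonrpc": "2.0",
        "id": request["id"],
        "result": {
            "role": "assistant",
            "content": {"type": "text", "text": llm(prompt)},
            "model": "stub-model",  # placeholder name, not a real model
            "stopReason": "endTurn",
        },
    }


if __name__ == "__main__":
    def stub_llm(prompt: str) -> str:
        # Counts the bullet lines instead of calling a real model.
        return f"{prompt.count('- ')} commits summarized"

    request = summary_request(3, ["fix login bug", "bump deps"])
    print(handle_sampling(request, stub_llm, approved=True)["result"]["content"]["text"])
    print(handle_sampling(request, stub_llm, approved=False)["error"]["message"])
```

The Server never holds model credentials. It only sees the text the Host chooses to return.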

Security, Trust, and User Control—The Ethical Core

The Model Context Protocol’s designers understood that connecting a capable AI to a user’s sensitive data and actionable tools creates serious risks. Therefore, security and explicit user consent are built directly into the specification.

1. User Consent and Control

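The MCP Client, together with its Host, acts as the ultimate gatekeeper. Before an AI agent is allowed to access any external resource or execute any external tool, the Host is expected to obtain the user’s explicit permission. The protocol cannot enforce this on its own, so it depends on implementers building clear consent flows into their applications.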

This critical step ensures:

- Non-Surprise: The user is aware that the AI is transitioning from mere conversation to a real-world action.

- Accountability: The user retains final authority over what data is accessed and what actions are taken.
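One simple way to picture the gatekeeper is a wrapper that refuses to forward a tool call until a callback says yes. This is a sketch of the idea, not part of any MCP SDK: `guarded_call`, `ConsentDenied`, and the `ask_user` callback are all hypothetical names.

```python
from typing import Any, Callable, Dict


class ConsentDenied(Exception):
    """Raised when the user declines a proposed action."""


def guarded_call(
    tool_name: str,
    arguments: Dict[str, Any],
    execute: Callable[[str, Dict[str, Any]], Any],
    ask_user: Callable[[str], bool],
) -> Any:
    """Forward the call to `execute` only after the user approves it."""
    prompt = f"Allow the assistant to run '{tool_name}' with {arguments}?"
    if not ask_user(prompt):
        raise ConsentDenied(tool_name)
    return execute(tool_name, arguments)


if __name__ == "__main__":
    def fake_execute(name: str, args: Dict[str, Any]) -> str:
        return f"{name} done"

    print(guarded_call("send_email", {"to": "a@example.com"},
                       fake_execute, ask_user=lambda prompt: True))
    try:
        guarded_call("send_email", {"to": "a@example.com"},
                     fake_execute, ask_user=lambda prompt: False)
    except ConsentDenied as denied:
        print("blocked:", denied)
```

In a real Host the `ask_user` callback would be a dialog or confirmation prompt, and the decision might be remembered per tool or per session.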

2. Roots: Defining Data Boundaries

The Roots capability addresses the fundamental security need for data compartmentalization. When an AI is given access to a file system, it must not be given access to the entire system.

- Boundary Definition: The Client explicitly defines the secure boundary, the “root”, that the Server is allowed to operate within. For a code review, the Root might be /user/documents/project-alpha/. The Git Server is expected to stay inside this folder, which guards against unauthorized data exfiltration or malicious code execution across the wider system.

- This principle of Least Privilege is foundational to the secure design of agentic AI.
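A Server that wants to honor a root needs a containment check along these lines. This is a minimal sketch using POSIX-style paths and purely lexical normalization. It does not resolve symlinks, which a production check would also need to handle, and `is_within_root` is a name made up for the example.

```python
import posixpath
from pathlib import PurePosixPath


def is_within_root(root: str, candidate: str) -> bool:
    """True if `candidate`, resolved against `root`, stays inside `root`."""
    root_path = PurePosixPath(posixpath.normpath(root))
    # join() lets an absolute candidate replace the root; normpath folds "..".
    target = PurePosixPath(posixpath.normpath(posixpath.join(root, candidate)))
    return target == root_path or root_path in target.parents


if __name__ == "__main__":
    root = "/user/documents/project-alpha/"
    for path in ["src/main.py", "../secrets.txt", "/etc/passwd",
                 "../project-alpha-old/notes.md"]:
        verdict = "allowed" if is_within_root(root, path) else "blocked"
        print(f"{path}: {verdict}")
```

Comparing path components rather than string prefixes matters here: a sibling folder such as project-alpha-old shares the prefix but is not inside the root.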

3. Tool Safety and Untrusted Systems

The protocol emphasizes that all actions executed by an external Tool via an MCP Server must be treated with caution. The risk of prompt injection or an external server misrepresenting its capabilities means security is an ongoing priority. The MCP framework encourages developers to:

- Isolate Tool Execution: Run external tools in secure, sandboxed environments.

- Scrutinize Tool Descriptions: Treat descriptions of external tool behavior (metadata) as untrusted unless they come from a known, trusted source.

Conclusion: The New Era of Context-Aware AI

The Model Context Protocol is more than a technical specification; it is the infrastructure necessary to realize the promise of truly intelligent, actionable, and secure AI agents. By standardizing the communication layer, the MCP reduces complexity, increases the model’s utility (reducing hallucinations and providing real-time data), and, most importantly, embeds explicit user consent and security boundaries at every step of the workflow.

The immediate future of AI lies not in building the next biggest LLM, but in mastering the connections that allow existing models to interact with the world reliably. MCP has quickly gained broad industry support as a way to achieve this goal. For developers, this means faster integration and more capable products. For users, it means an AI assistant that finally knows the real-time context of their work, making it an indispensable partner rather than a clever chatbot.

The era of the isolated LLM is over. The era of the Context-Aware AI Agent is here.
